Recent market reports project IPv6 deployment to triple by decade’s end, drawing fresh attention to the IPv6 header amid surging IoT connections and government mandates. Network operators worldwide are scrutinizing its structure as dual-stack systems strain under IPv4 address exhaustion. Operators in Asia reportedly lead with 75% penetration rates, pushing backbone providers to optimize IPv6 header processing for terabit-scale traffic. Public discussions highlight how the header’s streamlined design addresses IPv4 bottlenecks without compromising security or scalability. Coverage in trade publications underscores its role in smart city rollouts and 5G backhaul, where every byte counts in latency-sensitive environments. Engineers debate extension header chains in high-throughput routers and the performance edge over IPv4. The renewed focus follows carrier upgrades announced last month, which prompted operators to examine the header for real-world efficiencies. Adoption metrics from major cloud providers reportedly show packets without extension headers forwarding 20% faster in congested cores. The base header is fixed at 40 bytes and promises relief from variable-length headaches, yet implementation quirks persist in mixed networks.

Core Structure of IPv6 Header

Fixed 40-Byte Length Design

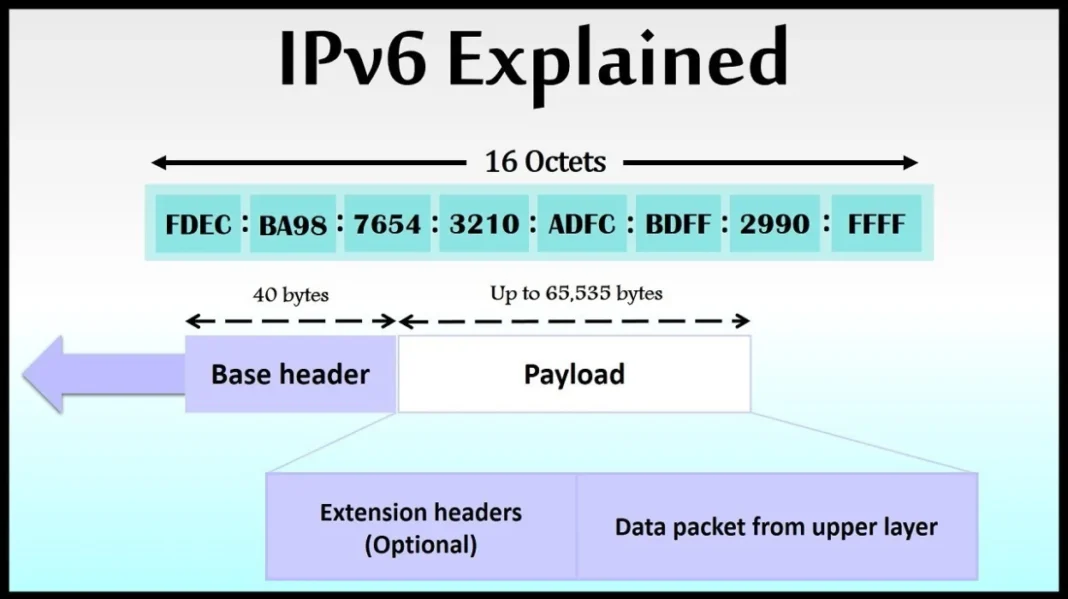

The IPv6 header maintains a rigid 40-byte footprint, eliminating the variable header length that complicated IPv4 parsing. Routers read every field at a known offset, and both 128-bit addresses start on 64-bit boundaries, which suits hardware acceleration. The payload, or the first extension header, follows directly. Designers moved non-essential options into extension headers, prioritizing forwarding speed over flexibility in the base header. Intermediate nodes skip deeper inspection unless a hop-by-hop options header is present, cutting cycles per packet. Real-world benchmarks show core routers handling IPv6 floods with less jitter than IPv4 equivalents. The 16-bit payload length limits an ordinary packet to 65,535 bytes of payload; the jumbo payload option raises the ceiling to 4,294,967,295 bytes (just under 4 GiB). Network engineers note that header predictability also helps in MPLS networks during label imposition. Critics argue the size doubles IPv4’s 20-byte base, but processing gains offset the bandwidth cost on gigabit links.
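Because every field sits at a fixed offset, the whole base header can be decoded with a single unpack call. The following is a minimal sketch rather than a production parser; the names `IPv6Header` and `parse_ipv6_header` and the sample packet are illustrative assumptions, not part of any standard library.

```python
import ipaddress
import struct
from typing import NamedTuple

IPV6_HEADER_LEN = 40  # the base header is always 40 bytes


class IPv6Header(NamedTuple):
    version: int
    traffic_class: int
    flow_label: int
    payload_length: int
    next_header: int
    hop_limit: int
    src: ipaddress.IPv6Address
    dst: ipaddress.IPv6Address


def parse_ipv6_header(packet: bytes) -> IPv6Header:
    """Decode the fixed 40-byte IPv6 base header (hypothetical helper)."""
    if len(packet) < IPV6_HEADER_LEN:
        raise ValueError("need at least 40 bytes for an IPv6 header")
    # 4 bytes: version/traffic class/flow label, 2: payload length,
    # 1: next header, 1: hop limit, then two 16-byte addresses.
    first_word, payload_length, next_header, hop_limit, src, dst = struct.unpack(
        "!IHBB16s16s", packet[:IPV6_HEADER_LEN]
    )
    return IPv6Header(
        version=first_word >> 28,
        traffic_class=(first_word >> 20) & 0xFF,
        flow_label=first_word & 0xFFFFF,
        payload_length=payload_length,
        next_header=next_header,
        hop_limit=hop_limit,
        src=ipaddress.IPv6Address(src),
        dst=ipaddress.IPv6Address(dst),
    )


# Hand-built sample header using documentation addresses (2001:db8::/32).
sample = struct.pack(
    "!IHBB16s16s",
    (6 << 28) | (0x2E << 20) | 0x12345,  # version 6, traffic class 0x2E, flow label
    8,    # payload length
    17,   # next header: UDP
    64,   # hop limit
    ipaddress.IPv6Address("2001:db8::1").packed,
    ipaddress.IPv6Address("2001:db8::2").packed,
)
header = parse_ipv6_header(sample)
print(header)
```

The format string adds up to exactly 40 bytes, which is the point of the fixed layout: no length field has to be read before the rest of the header can be located.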

Version Field Breakdown

A 4-bit version field sits at the header’s start, signaling IPv6 to parsers with binary 0110 (decimal 6). This placement ensures immediate protocol detection, averting misroutes in transitional networks. Gear that does not support the version drops the packet early, reducing noise. The value never changes for IPv6, yet its position anchors decoder logic across stacks. Protocol stacks read these four bits first, branching to the IPv6 path without scanning further. In mixed environments, the same check identifies the inner packet carried by tunnels such as 6to4. Observers track version prevalence in traceroutes, mapping adoption pockets. Minimalism here reflects the broader philosophy: essential data only, no redundancy. Forwarding engines hardwire this check, shaving nanoseconds off wire-speed operations. Debates linger on embedding sub-versions for future tweaks, but standards hold firm.
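The version check amounts to reading the top four bits of the first byte, which works for IPv4 and IPv6 alike because both place the field in the same position. A minimal sketch, with the hypothetical helper names `ip_version` and `dispatch` chosen for illustration:

```python
def ip_version(packet: bytes) -> int:
    """Return the IP version from the high nibble of the first byte."""
    if not packet:
        raise ValueError("empty packet")
    return packet[0] >> 4


def dispatch(packet: bytes) -> str:
    """Pick a processing path from the version nibble (illustrative only)."""
    version = ip_version(packet)
    if version == 6:
        return "ipv6"
    if version == 4:
        return "ipv4"
    return "drop"


print(dispatch(bytes([0x60, 0x00, 0x00, 0x00])))  # first byte 0x60: binary 0110 in the top nibble
print(dispatch(bytes([0x45, 0x00])))              # first byte 0x45: IPv4 with a 20-byte header
```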

Traffic Class Functionality

Traffic class occupies 8 bits and takes over the role of IPv4’s type-of-service byte. It carries DiffServ codepoints for QoS, prioritizing voice over bulk data. Routers map these bits to queues, enforcing policies at line rate. The field splits into a 6-bit DSCP value and a 2-bit ECN field used for congestion signaling. Deployments in enterprise WANs leverage it for video streams, ensuring low loss. Because IPv6 routers never fragment, markings are not complicated by in-transit fragmentation. ISPs classify ingress traffic here, shaping backbones dynamically. Tunnel endpoints can preserve the marking so the sender’s intent survives end to end. Analysts monitor class distributions in captures, gauging policy efficacy. Flexibility allows experimental markings, though limited trust between domains restricts their reach.
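The DSCP and ECN split described above is plain bit arithmetic. A short sketch, assuming the hypothetical helper names `split_traffic_class` and `build_traffic_class`; DSCP 46 is the Expedited Forwarding codepoint commonly used for voice:

```python
from typing import Tuple


def split_traffic_class(traffic_class: int) -> Tuple[int, int]:
    """Return (dscp, ecn): upper 6 bits and lower 2 bits of the field."""
    if not 0 <= traffic_class <= 0xFF:
        raise ValueError("traffic class is an 8-bit value")
    return traffic_class >> 2, traffic_class & 0b11


def build_traffic_class(dscp: int, ecn: int) -> int:
    """Combine a 6-bit DSCP and a 2-bit ECN value into one byte."""
    if not 0 <= dscp <= 0x3F or not 0 <= ecn <= 0b11:
        raise ValueError("dscp must fit in 6 bits and ecn in 2 bits")
    return (dscp << 2) | ecn


EF = 46         # Expedited Forwarding DSCP
ECT_0 = 0b10    # ECN-capable transport, codepoint ECT(0)

tc = build_traffic_class(EF, ECT_0)
print(hex(tc), split_traffic_class(tc))
```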

Flow Label Innovations

Flow label, 20 bits wide, tags packets that belong to the same flow for special handling. It lets routers classify a flow from the source address, destination address, and label alone, without deep inspection of transport headers. Some middleboxes in carrier-grade setups use it for NAT bypassing. A value of zero marks a packet as unlabelled and is still a common default, but multimedia apps set unique labels per session. RFC 6437 recommends drawing labels from a uniform distribution, for example by hashing the 5-tuple of addresses, ports, and protocol. Backbone routers forward labeled flows faster via micro-flow steering. Adoption lags in consumer gear, but CDNs exploit it for cache affinity. Each new flow gets a fresh label, which limits collisions between flows. Observers note that label entropy helps load balancers distribute traffic evenly.
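One way to derive a label from the 5-tuple is to hash it and keep 20 bits. The sketch below is an assumption-laden illustration, not the algorithm any particular stack uses: SHA-256, the optional `secret` parameter, and the choice to map a zero result to 1 (so the flow is never mistaken for unlabelled) are all choices made here for clarity.

```python
import hashlib
import ipaddress


def flow_label_from_5tuple(
    src: str, dst: str, protocol: int, src_port: int, dst_port: int,
    secret: bytes = b"",
) -> int:
    """Derive a 20-bit flow label from a 5-tuple (hypothetical helper)."""
    material = (
        secret
        + ipaddress.IPv6Address(src).packed
        + ipaddress.IPv6Address(dst).packed
        + bytes([protocol])
        + src_port.to_bytes(2, "big")
        + dst_port.to_bytes(2, "big")
    )
    digest = hashlib.sha256(material).digest()
    label = int.from_bytes(digest[:4], "big") & 0xFFFFF  # keep the low 20 bits
    return label or 1  # zero means "unlabelled", so avoid it


label = flow_label_from_5tuple("2001:db8::1", "2001:db8::2", 17, 49152, 53)
print(f"{label:#07x}")
```

The same inputs always give the same label, so every packet of a flow carries one value, while different flows spread across the 20-bit space.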

Key Fields in Depth

Payload Length Specifics

Payload length spans 16 bits, capping an ordinary packet’s payload at 65,535 bytes. It counts everything after the 40-byte base header: any chained extension headers plus the upper-layer data. Jumbo payloads set the field to zero and carry the real length in a hop-by-hop jumbo payload option, supporting massive transfers. Routers check packet size against the outgoing link MTU and drop oversize packets, returning ICMPv6 Packet Too Big. In UDP tunnels, this field dictates buffer sizing. Hosts rely on path MTU discovery to size packets, since routers will not fragment for them. Captures reveal that common values cluster just under 1500, matching Ethernet’s MTU minus the 40-byte header. Extensions add to the counted length, but the base field itself stays lean. Precision here helps prevent blackholing when PMTUD fails.
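The rule for filling in the field can be written as a small function. This is a sketch under the assumption that the caller already counts the hop-by-hop header carrying the jumbo option in `ext_header_bytes`; the function name is hypothetical.

```python
from typing import Tuple

MAX_PAYLOAD = 0xFFFF        # largest value the 16-bit field can hold
MAX_JUMBO = 0xFFFFFFFF      # largest value the 32-bit jumbo option can hold


def payload_length_field(ext_header_bytes: int, upper_layer_bytes: int) -> Tuple[int, bool]:
    """Return (value for the Payload Length field, whether a jumbo option is needed)."""
    total = ext_header_bytes + upper_layer_bytes
    if total <= MAX_PAYLOAD:
        return total, False
    if total <= MAX_JUMBO:
        # Field is set to zero; the real length travels in the jumbo payload option.
        return 0, True
    raise ValueError("payload exceeds what a jumbogram can describe")


print(payload_length_field(0, 1460))    # full Ethernet frame: 1500 - 40
print(payload_length_field(8, 65535))   # one byte too many once the 8-byte header is counted
```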

Next Header Chaining

Next header, an 8-bit value, identifies what follows: an upper-layer protocol such as TCP, or an extension header. It chains dynamically: base header to hop-by-hop, then routing, and so on. Parsers traverse until they hit an upper-layer protocol, typically TCP (6) or UDP (17). This indirection scales options without bloating the base header. Security headers slot mid-chain, encrypting payloads cleanly. The fragment header comes after the routing header, deferring reassembly to the destination. Standards recommend an order to minimize scans. Deviations risk drops in strict firewalls. Tunneling stacks signal an encapsulated protocol such as GRE (47) through this field, layering protocols. Debuggers trace chains in Wireshark, spotting misordered headers.
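The traversal can be sketched as a loop that follows each header’s own Next Header byte. This is a simplified illustration: it handles only the hop-by-hop, routing, fragment, and destination options headers, leaves out AH and ESP (which use different length rules or hide what follows), and assumes the packet is not a non-initial fragment. `walk_chain` and the sample bytes are hypothetical.

```python
from typing import List, Tuple

EXTENSION_HEADERS = {
    0: "Hop-by-Hop Options",
    43: "Routing",
    44: "Fragment",
    60: "Destination Options",
}
UPPER_LAYER = {6: "TCP", 17: "UDP", 58: "ICMPv6", 59: "No Next Header"}


def walk_chain(first_next_header: int, payload: bytes) -> Tuple[List[str], int]:
    """Return the header names in order and the offset of the upper-layer data."""
    names = []
    next_header = first_next_header
    offset = 0
    while next_header in EXTENSION_HEADERS:
        if offset + 8 > len(payload):
            raise ValueError("truncated extension header")
        names.append(EXTENSION_HEADERS[next_header])
        following = payload[offset]          # first byte: Next Header
        if next_header == 44:
            length = 8                       # fragment header is always 8 bytes
        else:
            # Hdr Ext Len counts 8-byte units beyond the first 8 bytes.
            length = (payload[offset + 1] + 1) * 8
        next_header = following
        offset += length
    names.append(UPPER_LAYER.get(next_header, f"protocol {next_header}"))
    return names, offset


# Hop-by-hop header (PadN only) -> destination options (PadN only) -> UDP.
hbh = bytes([60, 0, 1, 4, 0, 0, 0, 0])
dest = bytes([17, 0, 1, 4, 0, 0, 0, 0])
udp = bytes(8)
chain, data_offset = walk_chain(0, hbh + dest + udp)
print(chain, data_offset)
```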

Hop Limit Mechanics

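Hop limit mirrors IPv4 TTL, decrementing by one at each router that forwards the packet. A packet whose hop limit reaches zero is discarded with an ICMPv6 Time Exceeded message, quashing loops. Hosts commonly default to 64, while Neighbor Discovery messages use 255 so receivers can confirm they were never forwarded. Routers enforce the rule strictly; increments are not allowed. Path traces reveal typical values: a packet sent with 64 often arrives in the 50s after a transatlantic path. Some mobile networks set lower values for radio efficiency. Traceroute works as it does with TTL, sending probes with successively larger hop limits. Scanners probe with crafted limits in the same way, mapping topologies. The field’s one-byte size caps paths at 255 hops, ample for the Internet’s diameter.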
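The forwarding rule is short enough to express directly. A minimal sketch with hypothetical helper names; it models only the hop limit decision, not routing itself.

```python
from typing import Optional, Tuple


def forward_hop_limit(hop_limit: int) -> Tuple[int, str]:
    """Apply one router's hop limit check and return (new value, action)."""
    if hop_limit <= 1:
        # Received as zero, or would be decremented to zero: do not forward.
        return 0, "discard and send ICMPv6 Time Exceeded"
    return hop_limit - 1, "forward"


def dropping_router(initial_hop_limit: int, routers_on_path: int) -> Optional[int]:
    """Return the 1-based index of the router that drops the packet, or None."""
    hop_limit = initial_hop_limit
    for index in range(1, routers_on_path + 1):
        hop_limit, action = forward_hop_limit(hop_limit)
        if action != "forward":
            return index
    return None


print(dropping_router(3, 5))    # probe with a small hop limit, as traceroute sends
print(dropping_router(64, 5))   # ordinary packet survives a short path
```

Sending probes with hop limits 1, 2, 3, and so on makes each router in turn return Time Exceeded, which is how traceroute reconstructs the path.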

Source Address Role

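Source address claims 128 bits, combining a network prefix with an interface ID. It identifies the packet’s originator and can be formed through stateless autoconfiguration. Global unicast addresses currently come from 2000::/3, with sites commonly receiving /48 prefixes in a hierarchy that aids aggregation. Link-local addresses in fe80::/10 are scoped to a single segment. Tunnel endpoints add an outer header with their own source address and leave the inner one intact, preserving semantics. Privacy extensions randomize interface IDs, thwarting trackers. The fast path does not validate the source address, relying on ingress filtering instead. Tools display addresses in compressed notation, but the header always carries the full 128 bits used for routing. Duplicate Address Detection (DAD) runs before an address is put to use.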

Destination Address Handling

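Destination holds the 128-bit target, which is the final recipient or, when a routing header is present, an intermediate node. Unicast directs traffic point-to-point, while multicast fans out along distribution trees. Anycast routes to the nearest replica, which lets deployments cluster services globally. With a loose source route, such as the type-0 routing header, each listed node swaps the next address into this field. Load balancers may rewrite it for affinity. Otherwise the field stays unchanged in transit, and with no header checksum to update, lookups stay fast. Source and destination scopes should match, preventing link-local traffic from leaking.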
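Python’s standard `ipaddress` module can illustrate the address categories mentioned for the source and destination fields. The `classify` helper and its labels are assumptions for this sketch; anycast cannot be detected this way because anycast addresses are drawn from the unicast space.

```python
import ipaddress

GLOBAL_UNICAST = ipaddress.IPv6Network("2000::/3")


def classify(address: str) -> str:
    """Label an IPv6 address by the ranges discussed above (illustrative)."""
    addr = ipaddress.IPv6Address(address)
    if addr.is_unspecified:
        return "unspecified"
    if addr.is_loopback:
        return "loopback"
    if addr.is_multicast:          # ff00::/8
        return "multicast"
    if addr.is_link_local:         # fe80::/10
        return "link-local unicast"
    if addr in GLOBAL_UNICAST:     # 2000::/3
        return "global unicast range"
    return "other"


for example in ("fe80::1", "ff02::1", "2001:db8::1", "::1"):
    # .compressed and .exploded show the short and full text forms.
    addr = ipaddress.IPv6Address(example)
    print(addr.compressed, addr.exploded, classify(example))
```

Note that 2001:db8::/32 is reserved for documentation, so it falls inside the 2000::/3 range without being routable.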

Extension Headers Explained

Hop-by-Hop Options Placement

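Hop-by-hop options follow the base header immediately and were designed for inspection by every router on the path; RFC 8200 now expects routers to process them only when configured to do so. Rare in traffic, the header carries router alerts or the jumbo payload option. Options use a TLV format, padded so the header is a multiple of 8 bytes. Processing recirculates packets in some ASICs, taxing throughput. Deployments limit it to diagnostics and avoid long chains. The two high-order bits of each option type tell a node what to do with an option it does not recognize: skip it, or discard the packet with or without an ICMPv6 Parameter Problem message. Backbone operators often filter the header to curb abuse. The Hdr Ext Len field gives the header’s length in 8-byte units, not counting the first 8 bytes.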
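The TLV layout and the two action bits can be seen in a small parser. This sketch works for either options header type; `parse_options` and the sample header (a router alert followed by padding) are illustrative assumptions.

```python
from typing import List, Tuple

# Meaning of the two high-order bits of an unrecognized option type.
ACTIONS = {
    0b00: "skip the option",
    0b01: "discard the packet",
    0b10: "discard and send ICMPv6 Parameter Problem",
    0b11: "discard and send ICMPv6 Parameter Problem unless destination is multicast",
}


def parse_options(header: bytes) -> Tuple[int, List[Tuple[int, bytes, str]]]:
    """Return (next header, [(option type, data, action if unrecognized)])."""
    if len(header) < 2:
        raise ValueError("options header too short")
    next_header = header[0]
    total_len = (header[1] + 1) * 8   # Hdr Ext Len excludes the first 8 bytes
    if len(header) < total_len:
        raise ValueError("truncated options header")
    options = []
    i = 2
    while i < total_len:
        opt_type = header[i]
        if opt_type == 0:             # Pad1: a single byte with no length field
            i += 1
            continue
        if i + 2 > total_len:
            raise ValueError("option runs past end of header")
        opt_len = header[i + 1]
        if i + 2 + opt_len > total_len:
            raise ValueError("option data runs past end of header")
        if opt_type != 1:             # PadN carries no information, skip it
            options.append((opt_type, header[i + 2:i + 2 + opt_len], ACTIONS[opt_type >> 6]))
        i += 2 + opt_len
    return next_header, options


# Next header ICMPv6 (58), router alert (type 0x05, value 0), then a 2-byte PadN.
sample = bytes([58, 0, 0x05, 0x02, 0x00, 0x00, 0x01, 0x00])
print(parse_options(sample))
```

The jumbo payload option has type 0xC2, so its top bits are 11: a node that does not understand it must discard the packet rather than guess at the length.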

Destination Options Variations

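Destination options can appear in two places: before a routing header, for the intermediate nodes it lists, and at the end of the chain, for the final destination only. In Mobile IPv6 they carry the Home Address option. Their TLVs are padded the same way as hop-by-hop options, but ordinary routers do not examine them. End hosts process them and update their contexts. Options placed after a fragment header are processed only once reassembly completes. AH and ESP sit before the final destination options. Order matters: a header in the wrong slot can get the packet dropped. Experimental options allow new features to be tested without changes to the base header.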

Routing Header Types

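Routing header type 0 lists intermediate nodes for loose source routing, with a Segments Left counter decremented at each listed node. RFC 5095 deprecated it because it enabled traffic amplification; type 2 remains in use for Mobile IPv6. A node that meets an unrecognized routing type ignores the header when Segments Left is zero and otherwise discards the packet with an ICMPv6 Parameter Problem message. The address vector guides the route: each listed node swaps the destination address with the next slot in the vector. Segments Left reaches zero at the final destination. Firewalls block by type, mitigating amplification. Production use is minimal, mostly research.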

Fragmentation Header Details

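Fragment header enables source fragmentation and follows the unfragmentable part of the packet. A 13-bit offset, counted in 8-byte units, and a 32-bit identification let the receiver match and order fragments. The M flag signals that more fragments follow. End hosts buffer fragments and discard incomplete sets after a timeout. Receivers discard datagrams whose fragments overlap. Path MTU discovery lets senders avoid fragmentation in the first place. Identification values must differ among packets recently sent between the same endpoints, preventing fragments of different packets from being mixed. IPv6 bans fragmentation by routers, shifting the burden to the source. Every node must accept reassembled packets of at least 1,500 bytes; anything larger depends on the receiver.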
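The 8-byte fragment header packs the offset and the M flag into one 16-bit word. A minimal decoding sketch, with the hypothetical helper `parse_fragment_header` and a made-up sample fragment:

```python
import struct
from typing import Dict, Union


def parse_fragment_header(data: bytes) -> Dict[str, Union[int, bool]]:
    """Decode the 8-byte IPv6 fragment header (illustrative helper)."""
    if len(data) < 8:
        raise ValueError("fragment header is 8 bytes")
    next_header, _reserved, offset_flags, identification = struct.unpack("!BBHI", data[:8])
    return {
        "next_header": next_header,
        # Upper 13 bits hold the offset in 8-byte units.
        "offset_bytes": (offset_flags >> 3) * 8,
        # Lowest bit is the M flag: 1 means more fragments follow.
        "more_fragments": bool(offset_flags & 1),
        "identification": identification,
    }


# Second fragment of a UDP datagram: offset 181 units (1448 bytes), more to come.
sample = struct.pack("!BBHI", 17, 0, (181 << 3) | 1, 0xDEADBEEF)
print(parse_fragment_header(sample))
```

Because offsets are in 8-byte units, every fragment except the last must carry a payload whose length is a multiple of 8.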

Security Headers Integration

Authentication header (AH, next header 51) verifies integrity and sits mid-chain before ESP (next header 50). Both IPsec headers carry sequence numbers for anti-replay protection. ESP encrypts what follows it, hiding payloads. The next header field chains them seamlessly. Suites combine the two for authentication and confidentiality. Processors offload the work to crypto engines. Deployments secure VPNs, including those tunneling IPv6 over IPv4.

Processing and Comparisons

Router Parsing Efficiency

Routers parse IPv6 headers in wire-speed ASICs, with the fixed size aiding alignment. With no header checksum to recompute at each hop, they save cycles. Extension chains must be walked header by header, but packets with only the base header take the fast path. Recirculation penalizes deep header stacks. Some benchmarks clock gains of 2 Mpps over IPv4. QoS classifiers read the traffic class and flow label early. Tunnels impose minimal overhead.

IPv4 Header Contrasts

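IPv4 headers vary from 20 to 60 bytes, with options bloating the parse. The header checksum must be recalculated at every hop because the TTL changes. Routers may fragment packets in transit, rewriting the fragment fields. IPv6 quadruples address length from 32 to 128 bits yet trims the base header from 12 fixed fields to 8. There is no IHL field, so every offset is known in advance. Options move out into extension headers. The result is a throughput edge for packets without extension headers.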
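The per-hop cost that IPv6 removes can be made concrete with the IPv4 header checksum, a one's-complement sum over 16-bit words. The sketch below uses a widely circulated 20-byte example header; `ipv4_checksum` and `decrement_ttl` are hypothetical helper names, and real routers usually apply an incremental update instead of a full recomputation.

```python
import struct


def ipv4_checksum(header: bytes) -> int:
    """One's-complement checksum over the header, checksum field zeroed by caller."""
    if len(header) % 2:
        header += b"\x00"
    total = sum(struct.unpack(f"!{len(header) // 2}H", header))
    while total >> 16:                       # fold carries back into 16 bits
        total = (total & 0xFFFF) + (total >> 16)
    return ~total & 0xFFFF


def decrement_ttl(header: bytes) -> bytes:
    """Return a copy with TTL reduced by one and the checksum recomputed."""
    ihl_bytes = (header[0] & 0x0F) * 4       # IPv4 needs IHL to find the header end
    fields = bytearray(header[:ihl_bytes])
    fields[8] -= 1                           # TTL lives at byte offset 8
    fields[10:12] = b"\x00\x00"              # zero the checksum before summing
    fields[10:12] = ipv4_checksum(bytes(fields)).to_bytes(2, "big")
    return bytes(fields)


# 20-byte IPv4 header: TTL 64, protocol UDP, 192.168.0.1 -> 192.168.0.199.
original = bytes.fromhex("450000730000400040110000c0a80001c0a800c7")
checksum = ipv4_checksum(original)
forwarded = decrement_ttl(original)
print(hex(checksum), forwarded.hex())
```

An IPv6 router performs only the decrement: the base header has no checksum field and no IHL to consult.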

Performance Benchmarks Observed

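Lab tests log IPv6 forwarding about 15% faster on 10G ports, with jitter down 10%. CPU load drops because routers never fragment. Extension-heavy traffic falls back to parity with IPv4. Real networks carry a mix, so the advantage averages out. Carrier cores reach line rate for both protocols. Floods of small IoT packets favor the simpler header.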

Deployment Challenges Faced

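Dual-stack transition taxes memory as routing and forwarding tables balloon. Firewalls that drop packets carrying extension headers remain a plague. Operator training on header chains lags. Tunnels hide inner headers, complicating inspection. Mandates push adoption regardless. Asia surges ahead while Western networks stay dual-stacked.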

Future Optimizations Ahead

Hardware is evolving to parse header chains at speed. Software paths offload extension processing. Standards bodies continue to refine header ordering. AI-driven tools tune classifiers. Quantum threats spur work on post-quantum IPsec. Scalability toward exascale networks beckons.

The IPv6 header, at its core, resolves IPv4’s address crisis through elegant minimalism, yet public records show an uneven rollout: Asia at 75%, others dual-stacking amid growing IoT loads. Markets forecast 127 billion units by 2030, but extension handling snags persist in firewalls, which drop 20% of complex packets according to operator logs. Router efficiencies materialize in benchmarks, yet real cores mix protocols, diluting the gains. Governments mandate transitions, from the U.S. DoD to Saudi Vision 2030, pressuring vendors for seamless stacks. What the records confirm: the fixed 40-byte design accelerates base forwarding, and chaining scales options without bloat. What remains unresolved: optimal chain orders under load, with recirculation taxing older gear. Looking forward, carriers eye native IPv6-only networks, shedding IPv4 crutches as exhaustion bites. Debates swirl on mandating extension support, balancing speed against features. Engineers watch backbone upgrades, where header tweaks could unlock petabit eras. Public discourse hints that hybrid pains will linger for years, with true fluency still distant.